A new way to train AI systems could keep them safer from hackers

The context: One of the greatest unsolved flaws of deep learning is its vulnerability to so-called adversarial attacks. These attacks add small perturbations to the input of an AI system that look random, or are undetectable, to the human eye, yet they can make things go completely awry. Stickers strategically placed on a stop sign, for example, can trick a self-driving car into seeing a speed limit sign for 45 miles per hour, while stickers on a road can confuse a Tesla into veering into the wrong lane.

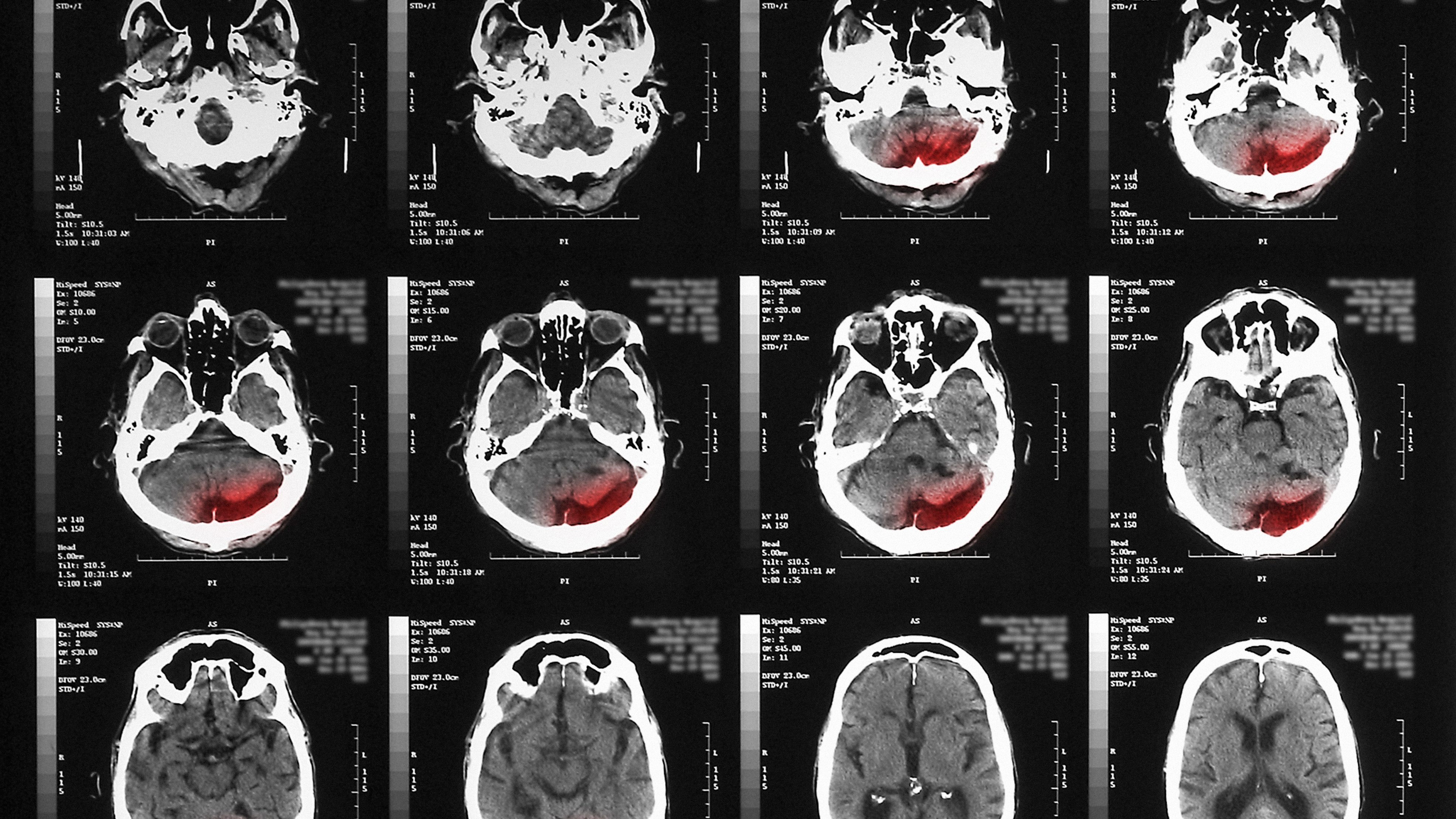

Safety critical: Most adversarial research focuses on image recognition systems, but deep-learning-based image reconstruction systems are vulnerable too. This is particularly troubling in health care, where the latter are often used to reconstruct medical images like CT or MRI scans from raw scanner measurements, such as x-ray data in the case of CT. A targeted adversarial attack could cause such a system to reconstruct a tumor in a scan where there isn’t one.

The research: Bo Li (named one of this year’s MIT Technology Review Innovators Under 35) and her colleagues at the University of Illinois at Urbana-Champaign are now proposing a new method for training such deep-learning systems to be more fail-proof and thus more trustworthy in safety-critical scenarios. They pit the neural network responsible for image reconstruction against another neural network responsible for generating adversarial examples, in a style similar to generative adversarial networks (GANs). Through iterative rounds, the adversarial network attempts to fool the reconstruction network into producing things that aren’t part of the original data, or ground truth. The reconstruction network continuously tweaks itself to avoid being fooled, making it safer to deploy in the real world.
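For a concrete picture of that back-and-forth, here is a toy sketch in plain Python. It is not the researchers' code or architecture. Everything in it is an assumption made for illustration: the one-number "image" `x`, the hypothetical measurement model `y = A * x`, a one-parameter reconstructor `w`, a one-parameter adversary `g` whose perturbation is capped at `eps`, and the learning rates. The adversary takes a gradient step to increase the reconstruction error, then the reconstructor takes a step to reduce its error on both the clean and the perturbed measurement.

```python
import math
import random

A = 0.5  # hypothetical forward operator: measurement y = A * x


def train(eps, steps=3000, lr=0.05, lr_adv=0.5, seed=0):
    """Alternate adversary and reconstructor updates; return the reconstructor weight."""
    rng = random.Random(seed)
    w = 0.0  # reconstructor parameter: x_hat = w * y
    g = 0.0  # adversary parameter: delta = eps * tanh(g * y), so |delta| <= eps
    for _ in range(steps):
        x = rng.uniform(-1.0, 1.0)  # ground truth (a single number in this toy)
        y = A * x                   # clean measurement

        # Adversary step: gradient ascent on the squared reconstruction error.
        t = math.tanh(g * y)
        delta = eps * t
        r_adv = w * (y + delta) - x
        grad_g = 2.0 * r_adv * w * eps * (1.0 - t * t) * y
        g += lr_adv * grad_g

        # Reconstructor step: gradient descent on clean + perturbed error.
        delta = eps * math.tanh(g * y)
        r_clean = w * y - x
        r_adv = w * (y + delta) - x
        grad_w = 2.0 * r_clean * y + 2.0 * r_adv * (y + delta)
        w -= lr * grad_w
    return w


def worst_case_error(w, eps, n=201):
    """Mean squared error on a grid of x values under the worse of delta = -eps or +eps."""
    total = 0.0
    for i in range(n):
        x = -1.0 + 2.0 * i / (n - 1)
        y = A * x
        total += max((w * (y + d) - x) ** 2 for d in (-eps, eps))
    return total / n


w_clean = train(eps=0.0)   # eps = 0 switches the adversary off
w_robust = train(eps=0.5)  # trained against the adversary
print("clean weight:", w_clean, "worst-case error:", worst_case_error(w_clean, 0.5))
print("robust weight:", w_robust, "worst-case error:", worst_case_error(w_robust, 0.5))
```

In this toy setup, the reconstructor trained without an adversary simply inverts the measurement, which also amplifies any perturbation. The one trained against the adversary settles on a smaller weight, giving up some accuracy on clean inputs in exchange for a lower worst-case error.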

The results: When the researchers tested their adversarially trained neural network on two popular image data sets, it was able to reconstruct the ground truth better than other neural networks that had been “fail-proofed” with different methods. The results aren’t perfect, however, and the method still needs refinement. The work will be presented next week at the International Conference on Machine Learning. (Read this week’s Algorithm for tips on how I navigate AI conferences like this one.)
